The Local Outlier Factor (LOF) is an unsupervised algorithm for detecting outliers in a dataset. LOF detects outliers by comparing the local density of a sample with the local densities of its neighbors. The local density is estimated from the distances between the sample and its k nearest neighbors. Outliers are samples whose density is substantially lower than that of their neighbors.
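
To make the density comparison concrete, here is a minimal pure-Python sketch of the LOF computation. It is an illustration under simplifying assumptions, not the sklearn implementation: the function name `local_outlier_factor`, the toy points, and `k=2` are made up for this example, ties at the k-th distance are ignored (each sample gets exactly k neighbors), and duplicate points that would lead to a zero reachability distance are not handled.

```python
import math


def local_outlier_factor(points, k=2):
    """Return one LOF score per point (hypothetical helper for illustration).

    Scores near 1 mean the point is about as dense as its neighbors;
    larger scores mean the point lies in a sparser region than they do.
    """
    n = len(points)
    # Pairwise Euclidean distances between all points.
    dist = [[math.dist(p, q) for q in points] for p in points]

    neighbors = []   # indices of the k nearest neighbors of each point
    k_distance = []  # distance from each point to its k-th nearest neighbor
    for i in range(n):
        order = sorted((j for j in range(n) if j != i), key=lambda j: dist[i][j])
        neighbors.append(order[:k])
        k_distance.append(dist[i][order[k - 1]])

    # Local reachability density: inverse of the mean reachability distance,
    # where reach(i, j) = max(k_distance[j], dist[i][j]).
    lrd = []
    for i in range(n):
        reach = [max(k_distance[j], dist[i][j]) for j in neighbors[i]]
        lrd.append(len(reach) / sum(reach))

    # LOF: mean density of the neighbors divided by the point's own density.
    return [sum(lrd[j] for j in neighbors[i]) / (k * lrd[i]) for i in range(n)]


# Four points on a unit square plus one far-away point.
points = [(0, 0), (0, 1), (1, 0), (1, 1), (5, 5)]
scores = local_outlier_factor(points, k=2)
for point, score in zip(points, scores):
    print(point, round(score, 2))
```

The four corner points receive a score of about 1, while the isolated point receives a much larger score. sklearn reports the negated value, so a large LOF corresponds to a strongly negative `negative_outlier_factor_`.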

Program code in GitHub repository

As always, you can find the complete Jupyter notebook used to create this article in my GitHub repository.

Implementation of the Local Outlier Factor Algorithm

For the implementation of the LOF algorithm, we use the sklearn library.

The following section shows how to build the program code based on the Boston House Prices Kaggle dataset. First, we remove all missing values from the dataset and collect the names of all numeric features in a list, because only numeric features can be passed to the algorithm. After fitting, the negative_outlier_factor_ attribute of the LocalOutlierFactor object holds the negated local outlier factor of each sample. Inliers score close to -1, while outliers have strongly negative values. In my example, I declare a sample an outlier when its score is lower than -1.5.

import pandas as pd

from sklearn.neighbors import LocalOutlierFactor

df = pd.read_csv(

filepath_or_buffer = '../train_bostonhouseprices.csv',

index_col = "Id"

)

# drop all columns with a lot of missing values

df = df.drop(["Alley", "PoolQC", "Fence", "MiscFeature", "FireplaceQu"], axis='columns')

# drop all rows with missing values

df = df.dropna(axis='index')

col_numeric = list(df.drop("SalePrice", axis='columns').describe())

clf = LocalOutlierFactor()

clf.fit_predict(df[col_numeric])

# outliers have a more negative value (inliers are close to -1)

df["negative_outlier_factor"] = clf.negative_outlier_factor_

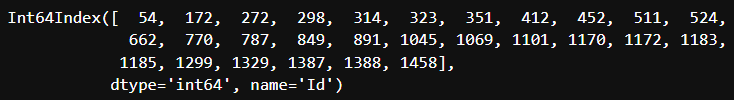

df[df.negative_outlier_factor < -1.5].index

The output of this script is the index of every sample labeled as an outlier. These samples should be analyzed further before deciding whether to drop them from the dataset.
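
The -1.5 threshold can be checked against the labels that sklearn assigns itself. The sketch below uses synthetic data instead of the house price dataset: the random cluster, the planted point at (8, 8), and `n_neighbors=20` are assumptions chosen for illustration. With the default `contamination="auto"`, the `offset_` attribute of the fitted estimator is -1.5, so thresholding `negative_outlier_factor_` at -1.5 should reproduce the labels returned by `fit_predict`.

```python
import numpy as np
from sklearn.neighbors import LocalOutlierFactor

# Synthetic data: one dense cluster plus a single planted outlier.
rng = np.random.default_rng(0)
X = np.vstack([rng.normal(0.0, 1.0, size=(100, 2)), [[8.0, 8.0]]])

clf = LocalOutlierFactor(n_neighbors=20)
labels = clf.fit_predict(X)            # -1 for outliers, 1 for inliers
scores = clf.negative_outlier_factor_  # negated LOF, inliers are near -1

# Manual thresholding with the same cut-off used in the article.
manual = np.where(scores < -1.5, -1, 1)

print("offset_:", clf.offset_)
print("planted point score:", scores[-1])
print("labels match manual threshold:", bool((labels == manual).all()))
```

If a different share of outliers is expected, the `contamination` parameter can be set to a fraction instead of `"auto"`, in which case sklearn derives the threshold from the scores rather than using -1.5.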